The provider architecture should make it much easier to get a fully customized, yet consistent runtime with the right set of Python dependencies.īut that’s not all: you can write your own custom providers and add things like custom connection types, customizations of the Connection Forms, and extra links to your operators in a manageable way. Other providers are automatically installed when you choose appropriate extras when installing Airflow. Some of the common providers are installed automatically (ftp, http, imap, sqlite) as they are commonly used.

#Airflow 2.0 github plus#

Now you can create a custom Airflow installation from “building” blocks and choose only what you need, plus add whatever other requirements you might have. Each provider package is for either a particular external service (Google, Amazon, Microsoft, Snowflake), a database (Postgres, MySQL), or a protocol (HTTP/FTP). We’ve split Airflow into core and 61 (for now) provider packages.

#Airflow 2.0 github code#

These changes have removed over three thousand lines of code from the KubernetesExecutor, which makes it run faster and creates fewer potential errors.ĭocs on pod_override Airflow core and providers: Splitting Airflow into 60+ packages:Īirflow 2.0 is not a monolithic “one to rule them all” package. We have also replaced the executor_config dictionary with the pod_override parameter, which takes a Kubernetes V1Pod object for a1:1 setting override. yaml pod_template_file instead of specifying parameters in their airflow.cfg.

#Airflow 2.0 github full#

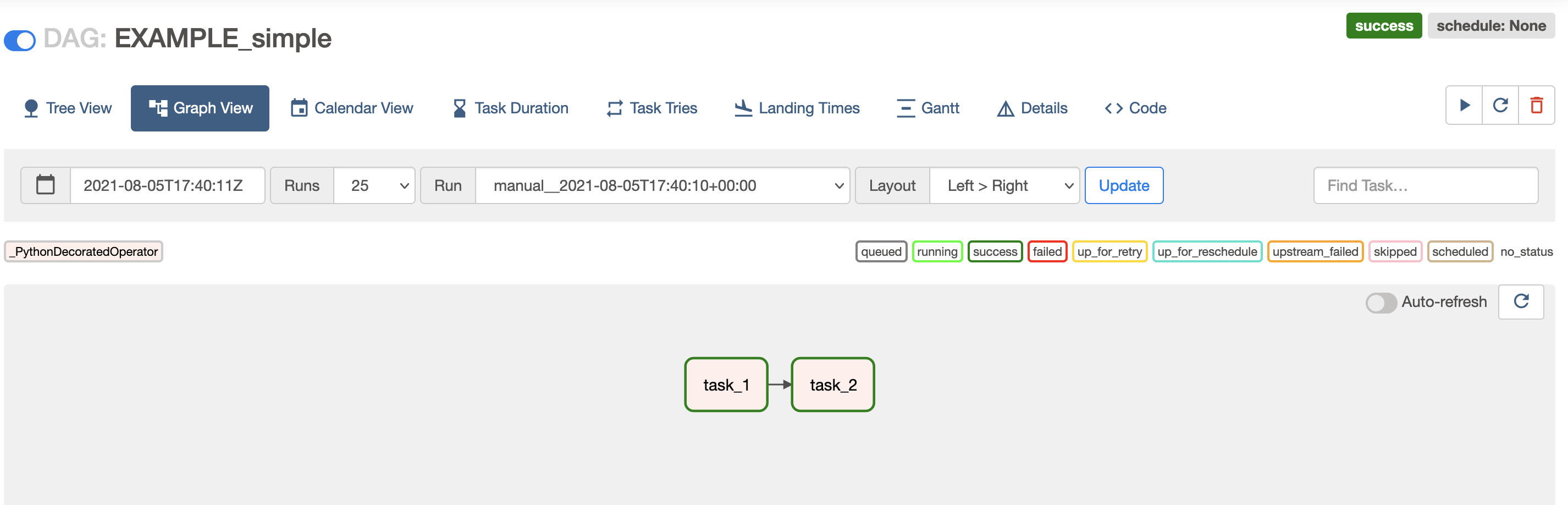

Users will now be able to access the full Kubernetes API to create a. Simplified KubernetesExecutorįor Airflow 2.0, we have re-architected the KubernetesExecutor in a fashion that is simultaneously faster, easier to understand, and more flexible for Airflow users. Read more about it in the Smart Sensors documentation. This feature is in “early-access”: it’s been well-tested by Airbnb and is “stable”/usable, but we reserve the right to make backwards incompatible changes to it in a future release (if we have to. To improve this, we’ve added a new mode called “Smart Sensors”. If you make heavy use of sensors in your Airflow cluster, you might find that sensor execution takes up a significant proportion of your cluster even with “reschedule” mode. Smart Sensors for reduced load from sensors (AIP-17) We have also added an option to auto-refresh task states in Graph View so you no longer need to continuously press the refresh button :).Ĭheck out the screenshots in the docs for more. We’ve given the Airflow UI a visual refresh and updated some of the styling. If you find an example where this isn’t the case, please let us know by opening an issue on GitHubįor more information, check out the Task Group documentation. SubDAGs will still work for now, but we think that any previous use of SubDAGs can now be replaced with task groups.

SubDAGs were commonly used for grouping tasks in the UI, but they had many drawbacks in their execution behaviour (primarily that they only executed a single task in parallel!) To improve this experience, we’ve introduced “Task Groups”: a method for organizing tasks which provides the same grouping behaviour as a subdag without any of the execution-time drawbacks. There’s no config or other set up required to run more than one scheduler-just start up a scheduler somewhere else (ensuring it has access to the DAG files) and it will cooperate with your existing schedulers through the database.įor more information, read the Scheduler HA documentation.

To fully use this feature you need Postgres 9.6+ or MySQL 8+ (MySQL 5, and MariaDB won’t work with more than one scheduler I’m afraid). This is super useful for both resiliency (in case a scheduler goes down) and scheduling performance. It’s now possible and supported to run more than a single scheduler instance. Over at Astronomer.io we’ve benchmarked the scheduler-it’s fast (we had to triple check the numbers as we don’t quite believe them at first!) Scheduler is now HA compatible (AIP-15) Massive Scheduler performance improvementsĪs part of AIP-15 (Scheduler HA+performance) and other work Kamil did, we significantly improved the performance of the Airflow Scheduler. We now have a fully supported, no-longer-experimental API with a comprehensive OpenAPI specification From corators import dag, task from import days_ago ( default_args = () def load ( total_order_value : float ): print ( "Total order value is: %.2f " % total_order_value ) order_data = extract () order_summary = transform ( order_data ) load ( order_summary ) tutorial_etl_dag = tutorial_taskflow_api_etl () Fully specified REST API (AIP-32)
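To make the REST API section a little more concrete, here is a minimal sketch of a client call that lists DAGs through the stable API using only the Python standard library. The base URL and credentials are placeholders, and the sketch assumes the webserver has the basic-auth API backend enabled; your deployment may use a different auth backend.

```python
import base64
import json
import urllib.request


def build_list_dags_request(base_url, username, password, limit=10):
    """Build (but do not send) a GET request for the stable API's DAG list."""
    url = f"{base_url.rstrip('/')}/api/v1/dags?limit={limit}"
    # Assumption: the API is configured for HTTP basic authentication.
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Basic {token}", "Accept": "application/json"},
        method="GET",
    )


def list_dag_ids(request, opener=urllib.request.urlopen):
    """Send the request and return the dag_id of every DAG in the response."""
    with opener(request) as response:
        payload = json.load(response)
    return [entry["dag_id"] for entry in payload["dags"]]


if __name__ == "__main__":
    # Placeholder host and credentials; nothing is sent over the network here.
    req = build_list_dags_request("http://localhost:8080", "admin", "admin")
    print(req.get_method(), req.full_url)
```

Calling `list_dag_ids(req)` against a running webserver would perform the actual request; keeping request construction separate makes the URL and headers easy to inspect first.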
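As an illustration of the Task Groups idea, the sketch below groups two tasks under a single collapsible node while keeping them in the same DAG. The DAG id, task ids, and shell commands are made up for the example, and `schedule_interval` is the Airflow 2.0-era argument name. Airflow is imported inside the function so the snippet can be loaded without it installed.

```python
def build_task_group_dag():
    """Return a DAG whose 'processing' tasks are shown as one group in the UI."""
    # Lazy imports: these require Airflow 2.x to be installed.
    from datetime import datetime

    from airflow import DAG
    from airflow.operators.bash import BashOperator
    from airflow.utils.task_group import TaskGroup

    with DAG(
        dag_id="task_group_sketch",  # hypothetical name
        start_date=datetime(2021, 1, 1),
        schedule_interval=None,
    ) as dag:
        start = BashOperator(task_id="start", bash_command="echo start")

        # Tasks created inside the context manager join the group; their
        # task ids are prefixed with the group id, e.g. "processing.clean".
        with TaskGroup(group_id="processing") as processing:
            clean = BashOperator(task_id="clean", bash_command="echo clean")
            enrich = BashOperator(task_id="enrich", bash_command="echo enrich")
            clean >> enrich

        end = BashOperator(task_id="end", bash_command="echo end")

        # A group can be wired into the graph like a single task.
        start >> processing >> end
    return dag
```

In a DAG file you would assign the result at module level, for example `dag = build_task_group_dag()`, so the scheduler can discover it. Unlike a SubDAG, the grouped tasks are ordinary tasks of the parent DAG.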
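For the KubernetesExecutor changes, a per-task override might look like the sketch below. In Airflow 2.0 the V1Pod object is supplied under a `pod_override` key of a task's `executor_config`. The memory values are arbitrary, and the example assumes the `kubernetes` Python client is installed; it is imported lazily so the snippet loads without it.

```python
def build_pod_override_config(memory_request="512Mi", memory_limit="1Gi"):
    """Return an executor_config dict that overrides memory for one task's pod."""
    # Lazy import: requires the official `kubernetes` Python client.
    from kubernetes.client import models as k8s

    return {
        "pod_override": k8s.V1Pod(
            spec=k8s.V1PodSpec(
                containers=[
                    k8s.V1Container(
                        # "base" is the name Airflow uses for the task's main container.
                        name="base",
                        resources=k8s.V1ResourceRequirements(
                            requests={"memory": memory_request},
                            limits={"memory": memory_limit},
                        ),
                    )
                ]
            )
        )
    }
```

The returned dict would be passed to an operator as `executor_config=build_pod_override_config()`; only the fields set on the V1Pod are overridden, and everything else comes from the pod template.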
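To give a rough idea of what writing a custom provider involves, the sketch below shows the metadata function a provider package exposes to Airflow. The package name, hook class, and link class are hypothetical, and the dictionary keys follow the Airflow 2.0-era provider schema as best recalled; check the provider documentation for your Airflow version, since later releases changed some keys.

```python
def get_provider_info():
    """Describe a (hypothetical) custom provider package to Airflow."""
    return {
        "package-name": "airflow-provider-example",  # hypothetical distribution name
        "name": "Example Provider",
        "description": "Hooks and operators for an example service.",
        "versions": ["0.1.0"],
        # Hooks listed here can contribute custom connection types and form tweaks.
        "hook-class-names": ["example_provider.hooks.example.ExampleHook"],
        # Operator extra links registered by the provider.
        "extra-links": ["example_provider.links.example.ExampleLink"],
    }


# Assumption: the package advertises the function through the
# "apache_airflow_provider" entry point group in its setup configuration.
ENTRY_POINTS = {
    "apache_airflow_provider": [
        "provider_info=example_provider:get_provider_info",
    ]
}

if __name__ == "__main__":
    print(get_provider_info()["package-name"])
```

`ENTRY_POINTS` would be passed as the `entry_points` argument of `setup()` so that Airflow can find the provider once the package is installed.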
